The headline prediction for the June 2017 election was not accurate. The final prediction was for a Conservative government with a majority of 66. In the event, the Conservatives fell eight seats short of a majority.
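The two headline figures follow from simple seat arithmetic. The sketch below assumes a House of Commons of 650 seats (a figure not stated in this article) and ignores the Speaker and any abstaining members; the function names are illustrative only.

```python
# Assumption: 650 seats in the House of Commons at the 2017 election.
TOTAL_SEATS = 650


def overall_majority(seats: int, total: int = TOTAL_SEATS) -> int:
    """Seats held minus seats held by all other parties (negative: no majority)."""
    return seats - (total - seats)


def seats_short_of_majority(seats: int, total: int = TOTAL_SEATS) -> int:
    """Extra seats needed to reach the smallest possible majority."""
    return max(0, total // 2 + 1 - seats)


predicted_con = 358  # predicted Conservative seats
actual_con = 318     # actual Conservative seats

print(overall_majority(predicted_con))      # 66
print(seats_short_of_majority(actual_con))  # 8
```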

In numerical terms, the prediction and the outcome were:

| Party | 2015 Votes | 2015 Seats | Pred Votes | Pred Seats | Actual Votes | Actual Seats | Vote Error | Seat Error |
|---|---|---|---|---|---|---|---|---|
| CON | 37.8% | 331 | 44.3% | 358 | 43.5% | 318 | −0.8% | −40 |
| LAB | 31.2% | 232 | 35.3% | 218 | 41.0% | 262 | +5.7% | +44 |
| LIB | 8.1% | 8 | 7.9% | 3 | 7.6% | 12 | −0.3% | +9 |
| UKIP | 12.9% | 1 | 4.3% | 0 | 1.9% | 0 | −2.4% | 0 |
| Green | 3.8% | 1 | 2.0% | 1 | 1.7% | 1 | −0.3% | 0 |
| SNP | 4.9% | 56 | 4.1% | 49 | 3.1% | 35 | −1.0% | −14 |
| Plaid | 0.6% | 3 | 0.6% | 3 | 0.5% | 4 | −0.1% | +1 |
| MIN | 0.8% | 18 | 1.6% | 18 | 0.7% | 18 | −0.9% | 0 |

Labour's support was significantly underestimated, which caused the number of Labour seats also to be underestimated. Although the Conservative support figure was quite accurate, the error in the Labour support caused the predicted number of Conservative seats to be too high. The Liberal Democrats' seat total was also underestimated, but the prediction was accurate in saying that they would lose votes overall.

In Scotland, the prediction was correct that the SNP had passed its peak and would lose seats, mostly to the Conservatives. But the prediction did not foresee the full extent of the SNP decline. The predictions for the smaller parties were relatively good, with Plaid Cymru, UKIP and the Greens all predicted fairly accurately.

In total, sixty-nine seats were mis-predicted. This would be an acceptable result if the general trend had been right, but the overall prediction quality was poor at this election, mostly because of error in the pre-election opinion polls.

We will now look at these and other issues in more detail.

The final prediction involved not just a central forecast but also confidence bounds, between which the result should lie with 90pc confidence. The confidence bounds given were:

| Party | Low Seats | Central | High Seats | Actual Seats |
|---|---|---|---|---|
| CON | 314 | 358 | 418 | 318 |
| LAB | 166 | 218 | 269 | 262 |
| LIB | 0 | 3 | 8 | 12 |
| UKIP | 0 | 0 | 0 | 0 |
| Green | 0 | 1 | 1 | 1 |
| SNP | 34 | 49 | 55 | 35 |
| PC | 0 | 3 | 3 | 4 |
| N Ire | 18 | 18 | 18 | 18 |

The actual result was not close to the central forecast, but it was (mostly) inside the confidence bounds. The bounds were sufficient for all the parties except the Liberal Democrats, who won four more seats than their upper bound despite losing vote share, and Plaid Cymru, who won one more seat than their upper bound.
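The check described above can be reproduced directly from the table. This is a minimal sketch; the `BOUNDS` dictionary and the `misses` helper are illustrative names, and the figures are copied from the confidence-bounds table.

```python
# (low, high, actual) seat figures from the confidence-bounds table.
BOUNDS = {
    "CON": (314, 418, 318),
    "LAB": (166, 269, 262),
    "LIB": (0, 8, 12),
    "UKIP": (0, 0, 0),
    "Green": (0, 1, 1),
    "SNP": (34, 55, 35),
    "PC": (0, 3, 4),
    "N Ire": (18, 18, 18),
}


def misses(bounds):
    """Return {party: signed distance outside the interval} for results outside the bounds."""
    out = {}
    for party, (low, high, actual) in bounds.items():
        if actual > high:
            out[party] = actual - high
        elif actual < low:
            out[party] = actual - low
    return out


outside = misses(BOUNDS)
print(outside)  # {'LIB': 4, 'PC': 1}
```

Note that the party results are strongly correlated with one another, so counting how many parties fall inside their bounds is only a rough sanity check and not a formal calibration test of the 90pc level.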

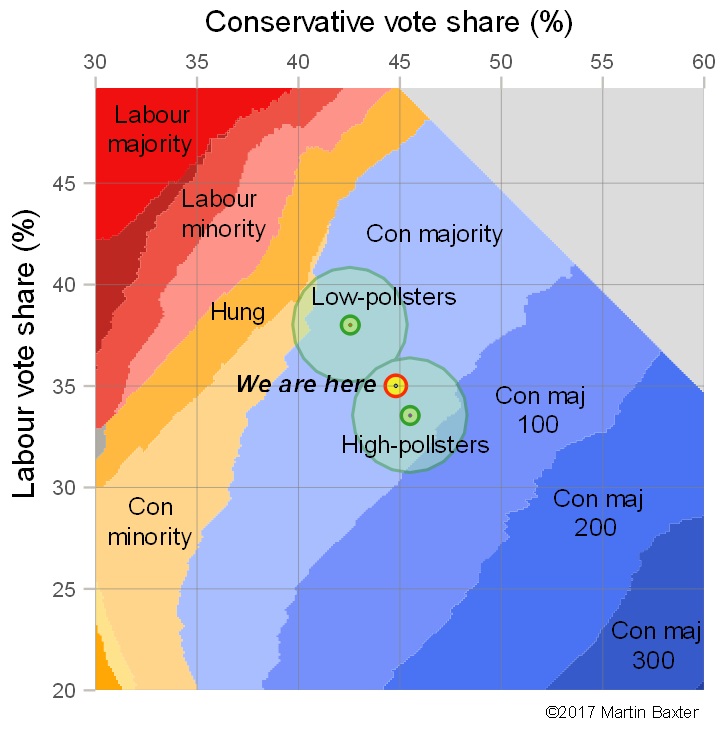

The confidence bounds were also shown in the final pre-election battleground graphic. The "Low-pollsters" were closer to the right answer than the "High-pollsters" and the "confidence circle" around them does include a sliver of "Conservative minority" territory. It was around this location that the final result materialised.

In summary, the confidence bounds worked fairly effectively and helped communicate in advance the relatively high degree of uncertainty about the result.

To make our prediction, we used an average of the final campaign polls taken by recognised polling organisations (members of the British Polling Council), plus a manual adjustment to correct for historic poll bias. This adjustment was defined as half the average error of polls over the last twenty years and was calculated as a swing of 1.1pc from Labour to Conservative.

| Pollster | Sample dates | Sample size | CON% | LAB% | LIB% | UKIP% | Green% | Error% |
|---|---|---|---|---|---|---|---|---|
| Opinium | 04 Jun 2017 - 06 Jun 2017 | 3,002 | 43 | 36 | 8 | 5 | 2 | 9.3 |
| Kantar Public | 01 Jun 2017 - 07 Jun 2017 | 2,159 | 43 | 38 | 7 | 4 | 2 | 6.5 |
| Panelbase | 02 Jun 2017 - 07 Jun 2017 | 3,018 | 44 | 36 | 7 | 5 | 2 | 9.5 |
| ComRes/Independent | 05 Jun 2017 - 07 Jun 2017 | 2,051 | 44 | 34 | 9 | 5 | 2 | 12.3 |
| YouGov/The Times | 05 Jun 2017 - 07 Jun 2017 | 2,130 | 42 | 35 | 10 | 5 | 2 | 13.3 |
| ICM/The Guardian | 06 Jun 2017 - 07 Jun 2017 | 1,532 | 46 | 34 | 7 | 5 | 2 | 13.5 |
| Ipsos-MORI/Evening Standard | 06 Jun 2017 - 07 Jun 2017 | 1,291 | 44 | 36 | 7 | 4 | 2 | 8.5 |
| Survation | 06 Jun 2017 - 07 Jun 2017 | 2,798 | 41 | 40 | 8 | 2 | 2 | 4.3 |
| Poll Average | 04 Jun 2017 - 07 Jun 2017 | 17,981 | 43.2 | 36.4 | 7.9 | 4.3 | 2.0 | 7.9 |
| Poll Bias correction | | | +1.1 | −1.1 | | | | |
| Total Average | 04 Jun 2017 - 07 Jun 2017 | 17,981 | 44.3 | 35.3 | 7.9 | 4.3 | 2.0 | 9.5 |
| Actual Result | 08 Jun 2017 | | 43.5 | 41.0 | 7.6 | 1.9 | 1.7 | 0.0 |

The pollsters showed a range of values, and some were closer to the true result than others. Survation did particularly well, with a poll showing a Conservative lead of 1pc, as did Kantar Public, with a lead of 5pc. The other pollsters showed leads between 7pc and 12pc. The actual Conservative lead was 2.5pc, compared with the average over all the pollsters of 6.8pc. The Electoral Calculus adjustment for poll bias made our calculations worse, since the poll error was in favour of the Conservatives for the first time in many years.
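The "Error%" column in the polling table is not defined in the text. The figures are consistent with the sum, over the five listed parties, of the absolute difference between the poll share and the actual share. The sketch below rests on that inferred definition; `error_score` is an illustrative name.

```python
# Actual vote shares (CON, LAB, LIB, UKIP, Green) from the "Actual Result" row.
ACTUAL = (43.5, 41.0, 7.6, 1.9, 1.7)

# Final poll shares in the same party order, copied from the table.
POLLS = {
    "Opinium": (43, 36, 8, 5, 2),
    "Kantar Public": (43, 38, 7, 4, 2),
    "Panelbase": (44, 36, 7, 5, 2),
    "ComRes": (44, 34, 9, 5, 2),
    "YouGov": (42, 35, 10, 5, 2),
    "ICM": (46, 34, 7, 5, 2),
    "Ipsos-MORI": (44, 36, 7, 4, 2),
    "Survation": (41, 40, 8, 2, 2),
}


def error_score(shares, actual=ACTUAL):
    """Assumed definition: sum of absolute share errors, rounded to one decimal."""
    return round(sum(abs(s - a) for s, a in zip(shares, actual)), 1)


scores = {name: error_score(shares) for name, shares in POLLS.items()}
ranking = sorted(scores, key=scores.get)

for name in ranking:
    print(f"{name:15s} {scores[name]:5.1f}")
```

Under this definition Survation and Kantar Public rank first and second, which matches the ordering described in the text.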

The overall poll error in the Conservative lead was over 4pc. This is better than 2015 when the equivalent error was more than 6pc, and better also than 2001 and the bad error in 1992. But it was worse than in 1997, 2005 and 2010. So it was around the middle of the distribution of polling errors. The novelty this year was that the error overstated the Conservatives rather than, as is traditional, understating them.
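The lead error can be worked through from the poll table. The text says only that an "average" of the polls was used; weighting each poll by its sample size is an assumption made here because it comes close to the published Poll Average row (within about 0.1pc), whereas a simple unweighted mean does not. The names below are illustrative.

```python
# (sample size, CON %, LAB %) for the eight final polls in the table.
POLL_ROWS = [
    (3002, 43, 36),  # Opinium
    (2159, 43, 38),  # Kantar Public
    (3018, 44, 36),  # Panelbase
    (2051, 44, 34),  # ComRes
    (2130, 42, 35),  # YouGov
    (1532, 46, 34),  # ICM
    (1291, 44, 36),  # Ipsos-MORI
    (2798, 41, 40),  # Survation
]

ACTUAL_LEAD = 43.5 - 41.0  # actual Conservative lead over Labour
BIAS_SWING = 1.1           # swing from Labour to Conservative applied as bias correction


def weighted_mean(rows, column):
    """Sample-size-weighted mean of one share column (assumed averaging method)."""
    total = sum(row[0] for row in rows)
    return sum(row[0] * row[column] for row in rows) / total


con_avg = weighted_mean(POLL_ROWS, 1)
lab_avg = weighted_mean(POLL_ROWS, 2)

poll_lead = con_avg - lab_avg
# A swing of s from Labour to Conservative widens the lead by 2 * s.
corrected_lead = poll_lead + 2 * BIAS_SWING

poll_lead_error = poll_lead - ACTUAL_LEAD
corrected_lead_error = corrected_lead - ACTUAL_LEAD

print(f"poll lead {poll_lead:.1f}, error {poll_lead_error:.1f}")
print(f"corrected lead {corrected_lead:.1f}, error {corrected_lead_error:.1f}")
```

Because the raw polls already overstated the Conservative lead, adding a further Labour-to-Conservative swing widens the lead error by 2.2pc.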

In previous years, Electoral Calculus has also used spread betting market prices from Sporting Index. This year these were not used. On the eve of polling day, the seats market from Sporting Index showed the Conservatives with 363 seats and Labour with 205. This was less accurate even than the polls, so no accuracy was lost by the decision not to use it.

Although poll error was the largest error, there were other errors. The main other errors were the poll bias correction, the EU Referendum model and the absence of tactical voting.

The table shows the effect of the errors as they are removed one by one:

| Scenario | CON | LAB | LIB | SNP | Plaid | Error Count |
|---|---|---|---|---|---|---|
| Electoral Calculus Final prediction | 359 | 218 | 3 | 48 | 3 | 108 |
| Without bias correction | 352 | 226 | 3 | 48 | 2 | 94 |
| Without poll error, bias | 336 | 251 | 4 | 39 | 1 | 44 |
| Without EU Ref Model, poll error, bias | 327 | 257 | 6 | 39 | 3 | 26 |
| With Tactical Voting, without EU/poll/bias | 317 | 263 | 15 | 35 | 2 | 8 |
| Actual Election Result | 318 | 262 | 12 | 35 | 4 | 0 |

Here "Error Count" is defined as the sum over the parties of the absolute difference between predicted and actual seats won. This is a larger measure than the number of seats mis-predicted.
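As an illustration of this definition, the sketch below computes the Error Count for two scenarios whose seat figures are given in full elsewhere in this article: the headline prediction from the first table and the fully corrected prediction. The `error_count` function name is illustrative.

```python
PARTIES = ["CON", "LAB", "LIB", "UKIP", "Green", "SNP", "Plaid", "N.Ire"]

ACTUAL_SEATS = [318, 262, 12, 0, 1, 35, 4, 18]
# Predicted seats from the headline prediction-versus-outcome table.
FINAL_PREDICTION = [358, 218, 3, 0, 1, 49, 3, 18]
# Predicted seats after all four corrections are applied.
CORRECTED_PREDICTION = [317, 263, 15, 0, 0, 35, 2, 18]


def error_count(predicted, actual=ACTUAL_SEATS):
    """Sum over parties of |predicted seats - actual seats|."""
    return sum(abs(p - a) for p, a in zip(predicted, actual))


print(error_count(FINAL_PREDICTION))      # 108
print(error_count(CORRECTED_PREDICTION))  # 8
```

A single mis-predicted seat typically adds two to this measure (one seat too many for one party, one too few for another), which is why it is larger than the count of mis-predicted seats.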

We see that the largest reduction in seat error comes from the poll error which contributes a score of 50 out of the total error of 108. The bias correction was worth 14, the problematic EU Referendum model was worth 18, and the absence of tactical voting was worth a further 18. The residual model error produced an error score of 8, equivalent to a one seat error in predicting the Conservatives and Labour. That residual error is very acceptable and is well within the normal expected bounds for model error.
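The attribution above is the difference between consecutive Error Count values in the table. A minimal sketch, with illustrative labels, shows the arithmetic:

```python
# (label, Error Count) in the order the corrections are applied in the table.
STEPS = [
    ("final prediction", 108),
    ("bias correction removed", 94),
    ("poll error removed", 44),
    ("EU Referendum model removed", 26),
    ("tactical voting enabled", 8),
]


def attribute(steps):
    """Credit each correction with the drop in Error Count it produces."""
    contributions = {}
    for (_, before), (label, after) in zip(steps, steps[1:]):
        contributions[label] = before - after
    # Whatever remains after the last correction is residual model error.
    contributions["residual model error"] = steps[-1][1]
    return contributions


contributions = attribute(STEPS)
for label, value in contributions.items():
    print(f"{label:30s} {value:3d}")
```

The contributions sum to the original 108. Note that a sequential attribution like this depends on the order in which the corrections are applied; a different order could assign somewhat different shares to each source.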

Even given all the corrections above, not every seat is predicted correctly. If we use the corrected parameters (removing the bias correction, fixing the pollster errors, removing the EU model and enabling tactical voting) then we get the following result:

| Measure | CON | LAB | LIB | UKIP | Green | SNP | Plaid | N.Ire |
|---|---|---|---|---|---|---|---|---|
| Predicted | 317 | 263 | 15 | 0 | 0 | 35 | 2 | 18 |
| Predicted − Actual | −1 | +1 | +3 | 0 | −1 | 0 | −2 | 0 |

This is a much more accurate result overall, but the prediction is still inexact. In terms of individual seats, fifty seats are wrongly predicted, which is only a fair result. In 2015 only 36 seats were mis-predicted and in 2010 there were 63 mis-predicted seats. This suggests that the 2017 election had relatively high local variation, in that many seats deviated from the national trend and were either more Conservative or less Conservative than the model suggested.

| Num | Seat Name | GE2015 | Prediction | GE2017 | Area | Swing | Comment |
|---|---|---|---|---|---|---|---|
| 1 | Ipswich | CON-08 | CON-02 | LAB-02 | Anglia | 4 | Marginal |
| 2 | Thurrock | CON-01 | LAB-03 | CON-01 | Anglia | 4 | Marginal |
| 3 | Waveney | CON-05 | LAB-02 | CON-17 | Anglia | 19 | |
| 4 | High Peak | CON-10 | CON-05 | LAB-04 | East Midlands | 9 | |
| 5 | Derbyshire North East | LAB-04 | LAB-09 | CON-06 | East Midlands | 15 | |
| 6 | Mansfield | LAB-11 | LAB-17 | CON-02 | East Midlands | 19 | |
| 7 | Battersea | CON-16 | CON-13 | LAB-04 | London | 17 | Anti-CON London |
| 8 | Enfield Southgate | CON-10 | CON-08 | LAB-09 | London | 17 | Anti-CON London |
| 9 | Kensington | CON-21 | CON-17 | LAB-00 | London | 17 | Anti-CON London |
| 10 | Middlesbrough South and Cleveland East | LAB-05 | LAB-07 | CON-02 | North East | 9 | |
| 11 | Stockton South | CON-10 | CON-07 | LAB-02 | North East | 9 | |
| 12 | Bolton West | CON-02 | LAB-01 | CON-02 | North West | 3 | Marginal |
| 13 | Crewe and Nantwich | CON-07 | CON-05 | LAB-00 | North West | 5 | Marginal |
| 14 | Warrington South | CON-05 | CON-01 | LAB-04 | North West | 5 | Marginal |
| 15 | Southport | LIB-03 | LAB-05 | CON-06 | North West | 11 | |
| 16 | Copeland | LAB-06 | LAB-10 | CON-04 | North West | 14 | By-election memory |
| 17 | Fife North East | NAT-10 | LIB-00 | NAT-00 | Scotland | 0 | Marginal |
| 18 | Gordon | NAT-15 | LIB-00 | CON-05 | Scotland | 5 | Marginal |
| 19 | Kirkcaldy and Cowdenbeath | NAT-19 | NAT-01 | LAB-01 | Scotland | 2 | Marginal |
| 20 | Midlothian | NAT-20 | NAT-02 | LAB-02 | Scotland | 4 | Marginal |
| 21 | Ayr Carrick and Cumnock | NAT-22 | NAT-03 | CON-06 | Scotland | 9 | Scottish volatility |
| 22 | Coatbridge, Chryston and Bellshill | NAT-23 | NAT-06 | LAB-04 | Scotland | 10 | Scottish volatility |
| 23 | Edinburgh North and Leith | NAT-10 | LAB-04 | NAT-03 | Scotland | 7 | Scottish volatility |
| 24 | Edinburgh South West | NAT-16 | CON-04 | NAT-02 | Scotland | 6 | Scottish volatility |
| 25 | Glasgow North East | NAT-24 | NAT-08 | LAB-01 | Scotland | 9 | Scottish volatility |
| 26 | Paisley and Renfrewshire South | NAT-12 | LAB-05 | NAT-06 | Scotland | 11 | Scottish volatility |
| 27 | Perth and North Perthshire | NAT-18 | CON-10 | NAT-00 | Scotland | 10 | Scottish volatility |
| 28 | Banff and Buchan | NAT-31 | NAT-07 | CON-09 | Scotland | 16 | Scottish volatility |
| 29 | Renfrewshire East | NAT-07 | LAB-03 | CON-09 | Scotland | 12 | Scottish volatility |
| 30 | Ross Skye and Lochaber | NAT-12 | LIB-02 | NAT-15 | Scotland | 17 | Scottish volatility |
| 31 | Canterbury | CON-18 | CON-05 | LAB-00 | South East | 5 | Marginal |
| 32 | Hastings and Rye | CON-09 | LAB-00 | CON-01 | South East | 1 | Marginal |
| 33 | Southampton Itchen | CON-05 | LAB-05 | CON-00 | South East | 5 | Marginal |
| 34 | Reading East | CON-13 | CON-04 | LAB-07 | South East | 11 | |
| 35 | Brighton Pavilion | Green-15 | LAB-14 | Green-25 | South East | 39 | Incumbency |
| 36 | Lewes | CON-02 | LIB-03 | CON-10 | South East | 13 | |
| 37 | Oxford West and Abingdon | CON-17 | CON-11 | LIB-01 | South East | 12 | |
| 38 | Stroud | CON-08 | CON-01 | LAB-01 | South West | 2 | Marginal |
| 39 | Bristol North West | CON-10 | CON-01 | LAB-09 | South West | 10 | |
| 40 | Bath | CON-08 | CON-05 | LIB-11 | South West | 16 | |
| 41 | Plymouth Moor View | CON-02 | LAB-09 | CON-11 | South West | 20 | |
| 42 | Carmarthen East and Dinefwr | NAT-14 | LAB-00 | NAT-10 | Wales | 10 | West-Wales Plaid strength |
| 43 | Ceredigion | LIB-08 | LIB-16 | NAT-00 | Wales | 16 | West-Wales Plaid strength |
| 44 | Telford | CON-02 | LAB-01 | CON-02 | West Midlands | 3 | Marginal |
| 45 | Warwick and Leamington | CON-13 | CON-09 | LAB-02 | West Midlands | 11 | |
| 46 | Stoke-on-Trent South | LAB-06 | LAB-10 | CON-02 | West Midlands | 12 | |
| 47 | Walsall North | LAB-05 | LAB-10 | CON-07 | West Midlands | 17 | |
| 48 | Colne Valley | CON-09 | CON-03 | LAB-02 | Yorks/Humber | 5 | Marginal |
| 49 | Keighley | CON-06 | CON-01 | LAB-00 | Yorks/Humber | 1 | Marginal |
| 50 | Morley and Outwood | CON-01 | LAB-06 | CON-04 | Yorks/Humber | 10 | Ed Balls memory |

[Note: this table uses the Slide-O-Meter notation, in which "CON-03" means a Conservative majority of 3%. Majorities are rounded to the nearest integer percentage, so "CON-00" means a majority of less than 0.5%.]
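The notation is easy to parse mechanically. The "Swing" column is not defined in the text, but in every row of the table it equals the predicted winner's majority plus the actual winner's majority. The sketch below uses that inferred rule; the function names are illustrative, and the same-party branch is an assumption, since no row in this table has the same predicted and actual winner.

```python
def parse_slide(code: str) -> tuple[str, int]:
    """Split a code such as 'CON-03' or 'Green-25' into (party, majority in %)."""
    party, _, majority = code.rpartition("-")
    return party, int(majority)


def prediction_gap(predicted: str, actual: str) -> int:
    """Inferred 'Swing' column: distance between predicted and actual majority."""
    p_party, p_maj = parse_slide(predicted)
    a_party, a_maj = parse_slide(actual)
    if p_party == a_party:
        # Assumption: same winner, so the gap is the difference in majorities.
        return abs(a_maj - p_maj)
    # Different winners: the two majorities lie on opposite sides of zero.
    return p_maj + a_maj


print(parse_slide("Green-25"))               # ('Green', 25)
print(prediction_gap("CON-02", "LAB-02"))    # 4  (Ipswich)
print(prediction_gap("LAB-14", "Green-25"))  # 39 (Brighton Pavilion)
```

Because the published majorities are rounded to whole percentages, a gap computed this way could differ from an unrounded calculation by up to one point.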

There are a number of stories here. In outline they are: